Dyno Robotics

Roboguide

Social guide robot for museums, science centers and shopping malls

My role

Interaction Designer, HRI Researcher, Facilitator, UX/UI, Motion Graphics

Timeline

3.5 years

2022-2026

Project team

Daniel Engelsons, Daniel Purgal, Fredrik Löfgren

Overview

Making social guide robots more approachable

Roboguide is a social guide robot used for guiding, entertainment and education in public spaces. Over 3.5 years, I helped shape its expressive behavior, client co-design process, affect-driven personality system, and product UX as it evolved from an early prototype into a deployed, scalable platform. The project is still ongoing, with current work focused on scaling customization and productizing the system behind the robot.

Expression Design

Designing an expressive robot character

Roboguide needed to do more than answer questions. In public spaces, people had to quickly understand whether the robot was listening, thinking, speaking, or ready to respond. My role was to design the expressive layer that made those states visible through facial animation, speech sync, and turn-taking cues.

Objectives

Implement interaction cues that support natural turn-taking

Enable context-aware emotion sequences

Sync the mouth to generated text-to-speech

Expression system

The robot’s face ran inside an Android app on the robot hardware. I made a library of animated facial sequences for different dialogue states and emotions, which were triggered in context and exported for real-time playback. I synced the mouth to speech by designing a fixed set of mouth positions, each identified by a universal viseme.
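The viseme-based mouth sync described above can be illustrated with a minimal sketch. It assumes the text-to-speech engine reports timed viseme events; the viseme IDs, the mouth-shape names, and the `VisemeEvent` and `mouth_shape_at` helpers are hypothetical and are not taken from the actual Roboguide app.

```python
from dataclasses import dataclass

# Hypothetical mapping from viseme IDs to a fixed set of mouth positions.
# The real set used on the robot is not specified in this case study.
VISEME_TO_MOUTH = {
    "sil": "closed",        # silence
    "PP": "closed",         # p, b, m
    "FF": "teeth_on_lip",   # f, v
    "aa": "open_wide",
    "O": "rounded",
    "E": "spread",
}


@dataclass(frozen=True)
class VisemeEvent:
    time_ms: int  # start time of the viseme, relative to the start of the audio
    viseme: str   # viseme ID as reported by a TTS engine (assumed format)


def mouth_shape_at(events, playback_ms, default="closed"):
    """Return the mouth position for the latest viseme at or before playback_ms."""
    current = default
    for event in sorted(events, key=lambda e: e.time_ms):
        if event.time_ms > playback_ms:
            break
        # Unknown visemes fall back to the default mouth position.
        current = VISEME_TO_MOUTH.get(event.viseme, default)
    return current
```

A renderer could call `mouth_shape_at` once per frame with the current audio playback position, so the mouth always shows the most recent viseme's position.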

Set of emotions on Elsa, the first deployed robot

Learnings from first deployment

Users struggled to tell natural verbal pauses in long answers apart from the "Listening" state, so they frequently interrupted the robot

Users were at times unsure if the robot was hearing what they were saying

In between interactions, the robot needed idle movement to indicate readiness to talk

Changes

We added a progress bar indicating how long the robot's answer would be, which led to far fewer interruptions

We clarified how much the robot was hearing by implementing a soundwave animation that reacts to volume level

A set of idle animations, such as blinking and looking around, was set to play at random intervals to reduce stillness when no one was talking
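The three changes above can be sketched as small helper functions. This is only an illustration under stated assumptions: the function names, the RMS-based volume measure, the animation names, and the timing ranges are all hypothetical, and the case study does not describe how the robot actually estimates answer length or volume.

```python
import math
import random


def answer_progress(elapsed_s, estimated_total_s):
    """Fraction of the spoken answer completed so far, clamped to 0..1."""
    if estimated_total_s <= 0:
        return 1.0
    return max(0.0, min(1.0, elapsed_s / estimated_total_s))


def wave_level(samples, full_scale=1.0):
    """Map a chunk of audio samples to a 0..1 level using RMS volume (assumed measure)."""
    if not samples:
        return 0.0
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return min(1.0, rms / full_scale)


def next_idle(rng, animations=("blink", "look_left", "look_right"),
              min_gap_s=2.0, max_gap_s=6.0):
    """Pick the next idle animation and a random delay in seconds before it plays."""
    return rng.choice(animations), rng.uniform(min_gap_s, max_gap_s)
```

For example, `answer_progress(5, 10)` gives `0.5` for a half-finished answer, and `next_idle(random.Random())` returns an animation name together with a delay between 2 and 6 seconds.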

Curio's face animations for Doseum in San Antonio, Texas

Controlling Personality

Turning robot character into a controllable system

As Roboguide moved into more open-ended conversations, expressive animations alone were not enough to maintain a coherent character. In my master’s thesis, I explored how personality traits, narrative framing, and internal affect could be turned into a system that shaped both what the robot said and how it expressed itself.

What I explored

An internal emotion system: Shaping the robot’s reactions by what was happening in the conversation

Continuous emotion: More consistent and sequential "moods" instead of fixed sequences

Backstory layer: Giving the robot a clearer identity rather than a generic assistant.

I added a closed loop to the system so that each interaction could shift the robot’s internal mood. That mood then shaped both the facial expression and the wording of the next response, making the character feel less static over time.

New system structure for emotional awareness

This enabled the internal emotion system to simulate continuous shifts in emotion and mood, and update the facial expressions and verbal tone accordingly. This work moved Roboguide from surface-level expression toward a more coherent character system.
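The closed loop described above can be sketched as follows. This is a minimal illustration, not the thesis implementation: representing mood as valence and arousal, the smoothing `rate`, the thresholds, and the expression names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Mood:
    valence: float = 0.0  # -1 (negative) .. 1 (positive); assumed dimension
    arousal: float = 0.0  # -1 (calm) .. 1 (excited); assumed dimension


def clamp(x, lo=-1.0, hi=1.0):
    return max(lo, min(hi, x))


def update_mood(mood, event_valence, event_arousal, rate=0.3):
    """Move the mood a fraction `rate` toward the appraisal of the latest interaction."""
    return Mood(
        valence=clamp(mood.valence + rate * (event_valence - mood.valence)),
        arousal=clamp(mood.arousal + rate * (event_arousal - mood.arousal)),
    )


def face_for(mood):
    """Map the continuous mood to a named facial expression (hypothetical names)."""
    if mood.valence >= 0.2:
        return "excited" if mood.arousal >= 0.2 else "content"
    if mood.valence <= -0.2:
        return "upset" if mood.arousal >= 0.2 else "sad"
    return "neutral"


def tone_hint(mood):
    """Build a wording hint for the next response from the same mood."""
    return f"Respond in a {face_for(mood)} tone."
```

Because each call to `update_mood` starts from the previous mood, a single interaction shifts the state gradually rather than replacing it, and both `face_for` and `tone_hint` read from that same state so the face and the wording stay consistent.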

The emotion system's mapping to the face expression

Results

Feeding the robot a backstory made it behave more like a living character. People engaged with it more curiously and personally.

The system enabled automatic animation, saving the time otherwise spent producing each sequence by hand

The system made emotion patterns easier to tune and inspect

Client alignment

Turning robot design into a repeatable process

Not every client needed the same robot. Different venues needed different levels of warmth, playfulness, authority, and visual identity.

Different venues need different expressions

In our first deployments, I designed a workshop-based process that helped clients define who their robot should be, and helped our team turn those decisions into a coherent implementation.

Co-design workflow with client

Positive effects

The workshop helped the clients' stakeholders align their goals with the robot within their organization

Clients could feel ownership and influence over a design that would become a core part of their venue

We as developers could learn about their customers' needs from people who meet them daily

Discovered pain points

The workshops required a lot of time and resources

Decision-making during sessions was often long and inconclusive

Participants didn't always have the data or experience we needed, making it harder to pinpoint their actual needs and wants on the spot

Idea

Could I develop a tool that helps decision-making and lets clients design their own robot at their own pace?

To reduce workshop overhead and make customization more accessible, I’m currently translating this process into a component-based visualization tool. The goal is to let clients explore and modify robot personality and appearance direction more directly at a detail level that suits their needs, while keeping the design system coherent.

Interface in progress to adjust and visualize foiling in real time

Interface in progress to adjust and visualize the facial interface in real time

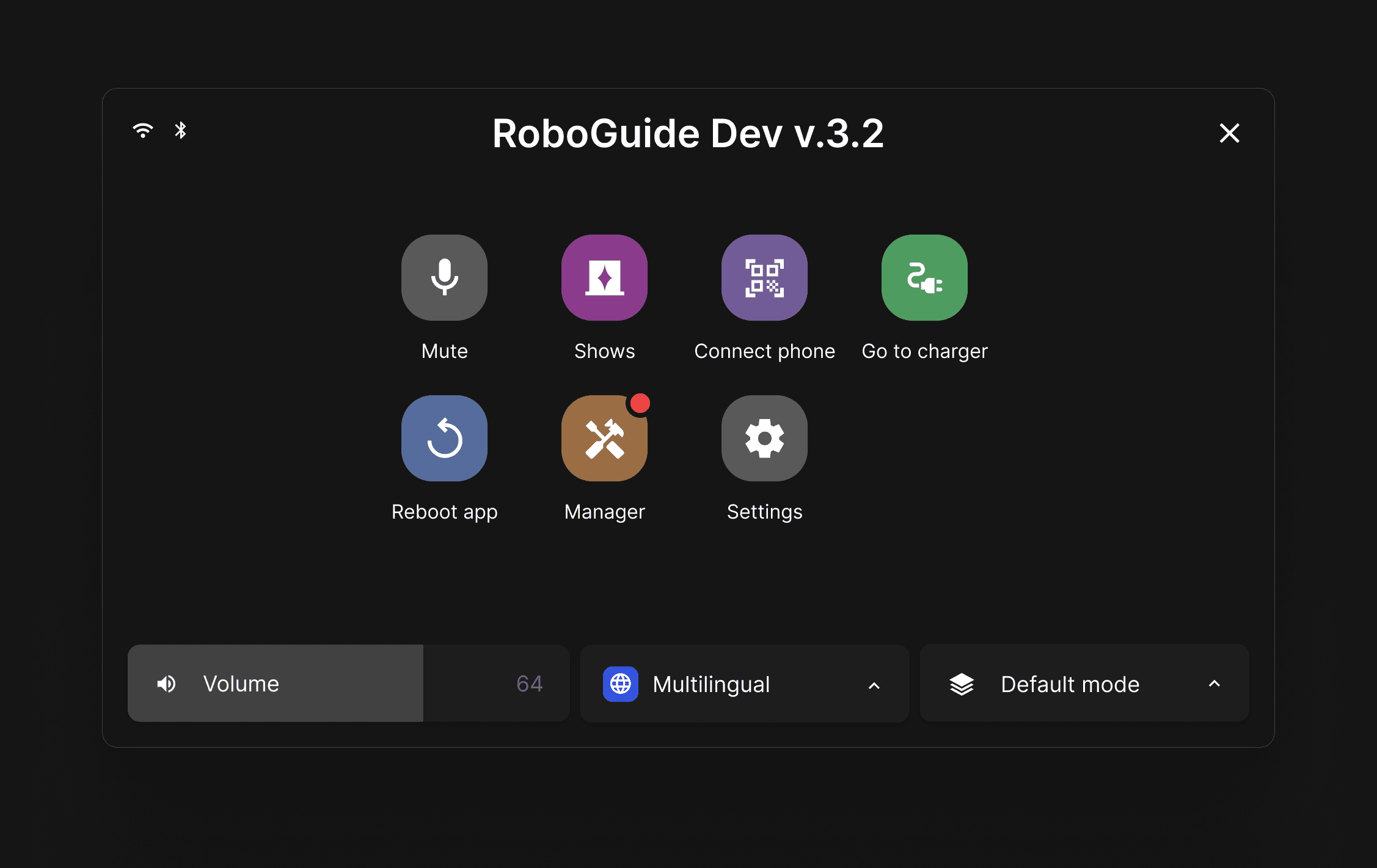

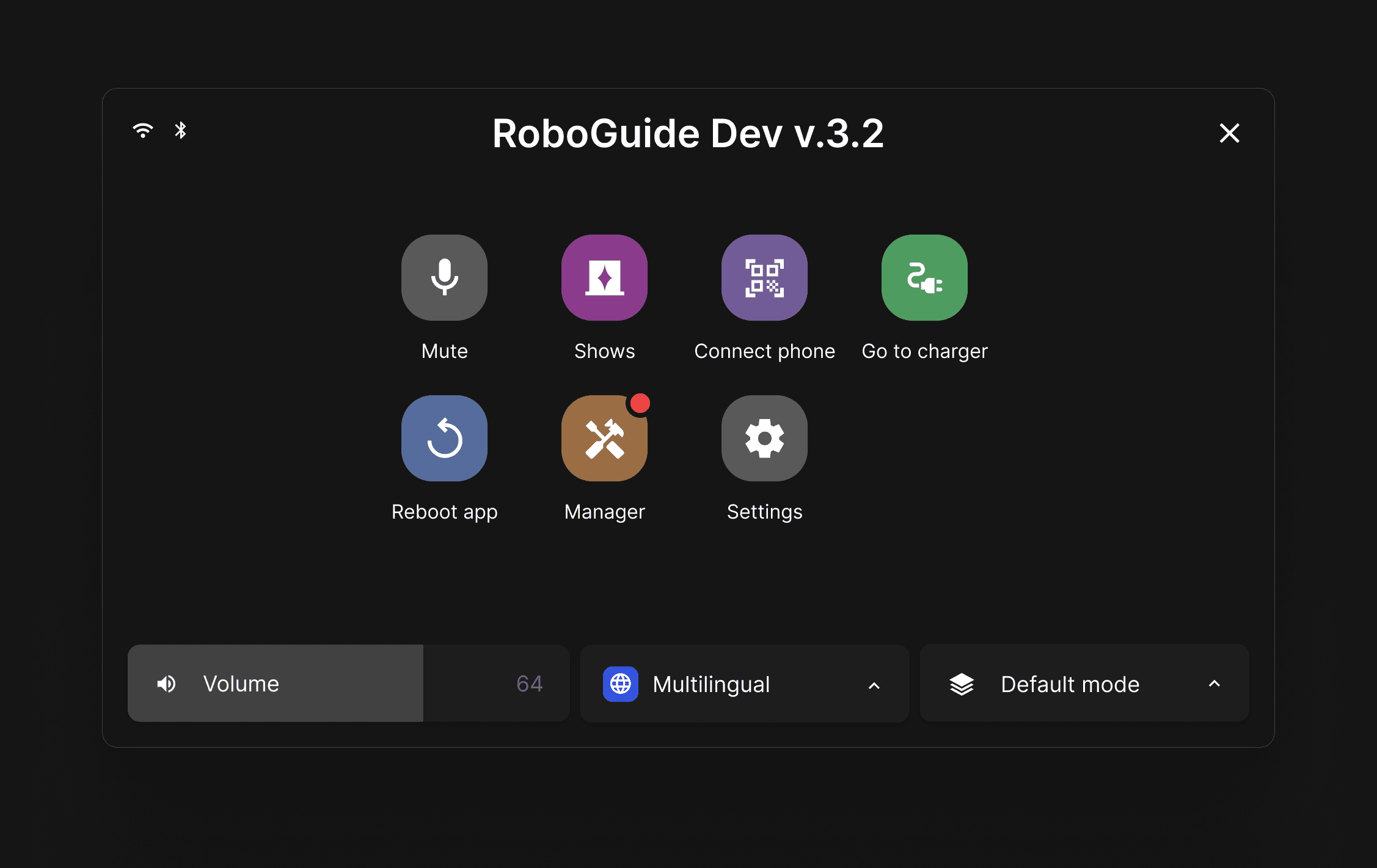

Operational UX

From prototype to usable product

As Roboguide matured, the challenge expanded beyond how the robot behaved in public to how it was operated behind the scenes. I’m currently redesigning the operational interfaces to make the system feel less like a development prototype and more like an intuitive product for staff and clients.

01 On-robot controls

Redesigning the settings and manager menus used for quick changes such as language, modes, shows, and outward behavior.

02 Staff-facing operations

Improving the tools used to handle the robot in day-to-day use, with clearer flows and easier introduction.

03 Client portal

Designing a more product-ready interface for managing content, scripts, knowledge, settings, and robot data.

The goal is not only to polish the visuals, but to make the whole platform easier to understand, operate, and scale. As the product grows across deployments, these interfaces need to support both everyday use and long-term maintenance.

What needed to change

Several tools still reflected how the system had evolved internally, rather than how staff and clients naturally expect to use it.

Different touchpoints served different users, but lacked a more coherent structure and product feel.

As more robots were deployed, configuration, content handling, and support needed to become easier and more consistent.

Current work

The on-robot manager interface is one of the first touchpoints I’m redesigning. The goal is to make quick operational tasks (such as changing language, shows, modes, and outward behavior) easier to understand and faster to perform in context.

Old interface

New Interface

Main changes

From a long, scroll-dependent interface to a single-screen overview

More recognizable task-based actions at a glance

Moved less-used controls into settings and dropdowns to reduce clutter

This phase is still in progress, but it marks an important shift in the project: from designing the robot’s behavior to designing the product ecosystem around it.

Impact

Robot as a Service

Roboguide has grown from an early expressive prototype into a deployed social robot platform used daily in public spaces. Across 3.5 years, I helped shape its character, behavior, client design process, and product direction.

So far, this work has contributed to:

7 deployed robots in Sweden and the USA

Daily use in some of Sweden's biggest science centers and shopping malls

Awarded 1st place at Blooloop Innovation Awards 2025 in the Best Guest Journey Experience category

Personal takeaways

Roboguide became a strong example of how I want to work: combining interaction design, systems thinking, and product development to make emerging technology usable in the real world.

Designing the right robot required just as much stakeholder alignment and client understanding as interface and behavior design.

As the project matured, the focus shifted from just making the product functional to making the whole system operable, configurable, and scalable.

Real-world use exposed issues around timing, turn-taking, and clarity that no prototype could fully reveal.

A believable robot personality comes from how expression, behavior, and narrative support each other, not from visuals alone.
